LiveBlogPosting Schema: A Powerful Tool for Top Stories Success

Posted by cml63

One of the great things about doing SEO at an agency is that you’re constantly working on different projects you might not have had the opportunity to explore before. Working agency-side lets you see such a wide variety of sites that you gain a more holistic perspective on the algorithm, and you get to work with all kinds of unique problems and implementations.

This year, one of the most interesting projects that we worked on at Go Fish Digital revolved around helping a large media company break into Google’s Top Stories for major single-day events.

When doing competitor research for the project, we discovered that one way many sites appear to be doing this is through the use of a schema type called LiveBlogPosting. This sent us down a path of fairly deep research into what this structured data type is, how sites are using it, and what impact it might have on Top Stories visibility.

Today, I’d like to share our findings on this schema type and draw conclusions about what they mean for search moving forward.

Who does this apply to?

With regard to LiveBlogPosting schema, the most relevant sites will be those where getting into Google’s Top Stories is a priority. These will generally be publishers that regularly post news coverage. Ideally, AMP will already be implemented, since the vast majority of Top Stories URLs are AMP-compatible (though this is not required).

Why non-publisher sites should still care

Even if your site isn’t a publisher eligible for Top Stories results, the content of this article may still provide you with interesting takeaways. While you might not be able to directly implement the structured data at this point, I believe we can use the findings of this article to draw conclusions about where the search engines are potentially headed.

If Google is ranking articles that are updated frequently and even providing rich features for this content, this might be an indication that Google is trying to incentivize the indexation of more real-time content. This structured data may be an attempt to help Google “fill a gap” in its ability to provide real-time results to its users.

While it makes sense that “freshness” ranking factors would apply most to publishers, non-publishers could run interesting tests of their own to measure whether regular updates have a positive impact on their site’s content.

What is LiveBlogPosting schema?

The LiveBlogPosting schema type is structured data that allows you to signal to search engines that your content is being updated in real-time. This provides search engines with contextual signals that the page is receiving frequent updates for a certain period of time.

On schema.org, LiveBlogPosting is listed as a subtype of BlogPosting, which itself descends from “Article” structured data. The site’s official definition describes it as: “A blog post intended to provide a rolling textual coverage of an ongoing event through continuous updates.”

Imagine a columnist watching a football game and creating a blog post about it. With every single play, the columnist updates the blog with what happened and the result of that play. Each time the columnist makes an update, the structured data also updates, indicating that a recent addition has been made to the article.
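To make the columnist example more concrete, here is a minimal Python sketch of that update loop. It assumes the live blog is held as a plain dict of schema.org JSON-LD and that updates arrive in chronological order; the helper name `add_update` and the game details are hypothetical, not taken from any publisher.

```python
from datetime import datetime, timezone


def add_update(live_blog: dict, headline: str, body: str, when: datetime) -> dict:
    """Append one timestamped update and bump the parent post's dateModified.

    Assumes updates are added in chronological order, so the newest update's
    timestamp is always the post's latest modification time.
    """
    stamp = when.isoformat()
    live_blog.setdefault("liveBlogUpdate", []).append({
        "@type": "BlogPosting",
        "headline": headline,
        "articleBody": body,
        "datePublished": stamp,  # each update carries its own timestamp
    })
    # The parent post now signals that a recent addition was made.
    live_blog["dateModified"] = stamp
    return live_blog


# Hypothetical game coverage.
game = {
    "@context": "https://schema.org",
    "@type": "LiveBlogPosting",
    "headline": "Example game: live play-by-play",
}
add_update(game, "Kickoff", "The game is underway.",
           datetime(2020, 11, 1, 13, 0, tzinfo=timezone.utc))
add_update(game, "Touchdown", "A 12-yard run opens the scoring.",
           datetime(2020, 11, 1, 13, 9, tzinfo=timezone.utc))
print(game["dateModified"])  # 2020-11-01T13:09:00+00:00
```

Keeping both a per-update `datePublished` and a post-level `dateModified` mirrors the two signals described above: what changed, and that the page as a whole was just refreshed.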

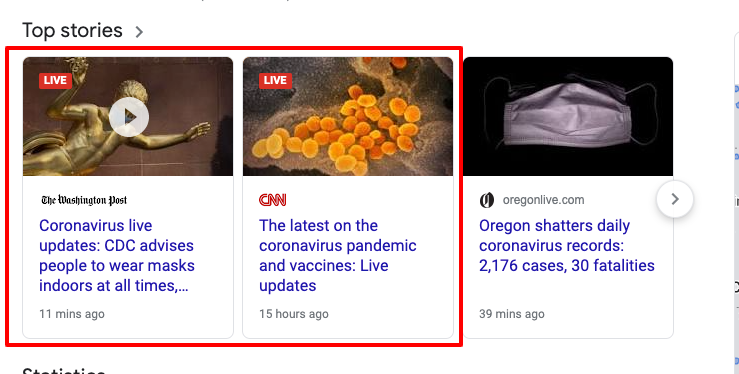

Articles with LiveBlogPosting structured data will often appear in Google’s Top Stories feature. In the top left-hand corner of the thumbnail image, a “Live” indicator signals to users that live updates are being made to the page.

Two Top Stories Results With The “Live” Tag

In the image above, you can see two publishers (The Washington Post and CNN) implementing LiveBlogPosting schema on their pages ranking for the term “coronavirus”. They’re likely using this structured data type to significantly improve their Top Stories visibility.

Why is this structured data important?

So now you might be asking yourself: why is this schema even important? You might be thinking, “I certainly don’t have the resources to have an editor continually publish updates to a piece of content throughout the day.”

We’ve been monitoring Google’s usage of this structured data specifically for publishers. Stories with this structured data type appear to have significantly improved visibility in the SERPs, and we can see publishers aggressively utilizing it for large events.

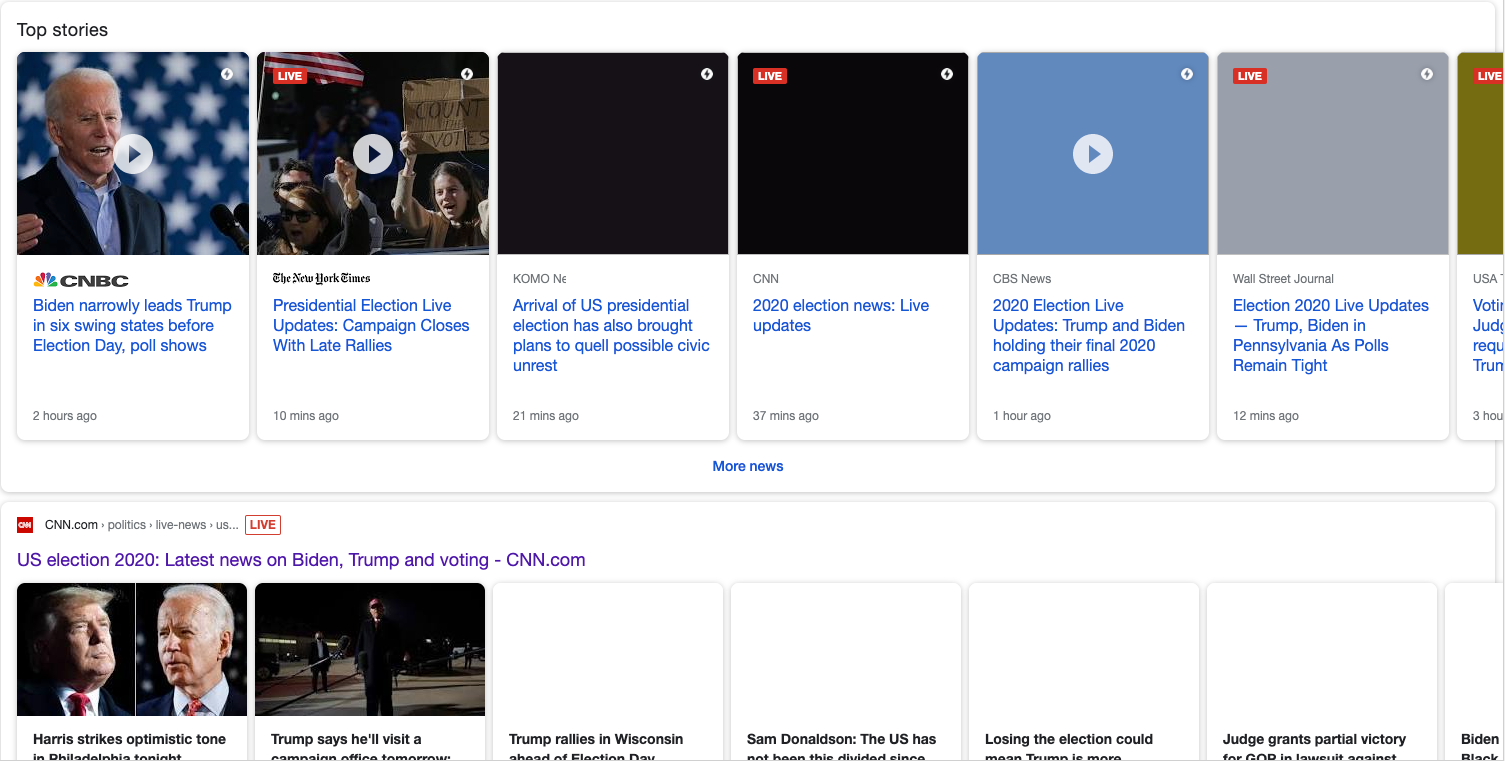

For instance, the below screenshot shows you the mobile SERP for the query “us election” on November 3, 2020. Notice how four of the seven results in the carousel are utilizing LiveBlogPosting schema. Also, beneath this carousel, you can see the same CNN page is getting pulled into the organic results with the “Live” tag next to it:

Now let’s look at the same query on the day after the election, November 4, 2020. Publishers are still heavily using this structured data type: five of the first seven Top Stories results use it.

In addition, CNN gets to double-dip and claim an additional organic result with the same URL that’s already shown in Top Stories. This is another common outcome of a LiveBlogPosting implementation.

In fact, this type of live blog post was one of CNN’s core strategies for ranking well for the US election.

Here is how they implemented this strategy:

- Create a new URL every day (to signal freshness)

- Apply LiveBlogPosting schema and continually make updates to that URL

- Ensure each update has its own dedicated timestamp
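The three steps above can be sketched in Python roughly as follows. This is not CNN’s code: the `example.com` base URL, the topic slug, and the helper name `daily_live_blog` are hypothetical, and the choice of a coverage window running from midnight to midnight UTC is an illustrative assumption.

```python
from datetime import date, datetime, time, timedelta, timezone

# Hypothetical base URL; no real publisher's URL pattern is reproduced here.
BASE_URL = "https://www.example.com/live-news"


def daily_live_blog(day: date, topic_slug: str) -> dict:
    """Skeleton LiveBlogPosting for one day of coverage at its own dated URL."""
    # Assumed coverage window: one full UTC calendar day.
    start = datetime.combine(day, time(0, 0), tzinfo=timezone.utc)
    end = start + timedelta(days=1)
    return {
        "@context": "https://schema.org",
        "@type": "LiveBlogPosting",
        # Step 1: a new URL every day, dated to signal freshness.
        "url": f"{BASE_URL}/{topic_slug}-{day.isoformat()}/",
        # Step 2: LiveBlogPosting bounded by that day's coverage.
        "coverageStartTime": start.isoformat(),
        "coverageEndTime": end.isoformat(),
        # Step 3: each entry appended here later gets its own timestamp.
        "liveBlogUpdate": [],
    }


for offset in range(2):
    post = daily_live_blog(date(2020, 11, 3) + timedelta(days=offset), "election-results")
    print(post["url"])
```

Generating a fresh document per day keeps each URL’s update list scoped to a single day’s events rather than growing indefinitely.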

Below you can see some examples of URLs CNN posted during this event. Each day a new URL was posted with LiveBlogPosting schema attached:

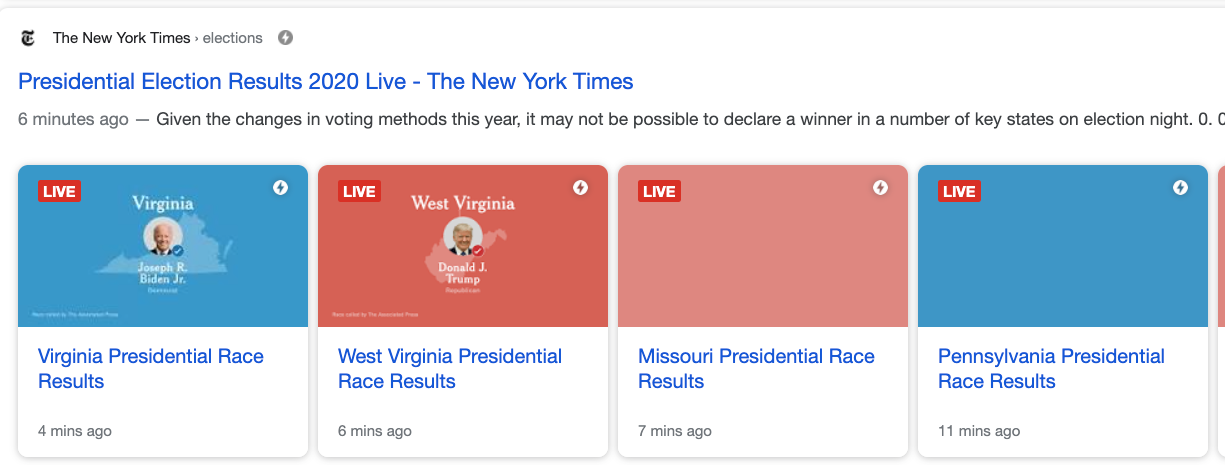

https://www.cnn.com/politics/l…

Here is another telling result for “us election” on November 4, 2020. The New York Times ranks in the #2 position on mobile for the term. While the ranking page isn’t a live blog post, underneath the result is an AMP carousel. Their strategy was to live blog each individual state’s results:

It’s clear that publishers are heavily using this schema type for extremely competitive news articles built around big events. Often, we see this strategy result in prominent visibility in Top Stories and even in the organic results.

How do you implement LiveBlogPosting schema?

So you have a big event that you want to optimize around and are interested in implementing LiveBlogPosting schema. What should you do?

1. Get whitelisted

The first thing you’ll need to do is get whitelisted by Google. If you have a Google representative in contact with your organization, I recommend reaching out to them. There isn’t a lot of information out there on this, and Google has even removed the help documentation it previously offered. However, the form to request access to the Live Coverage Pilot is still available.

This makes sense, as Google might not want news sites with questionable credibility to access this feature. That Google wants to limit how many sites can use it is also an indication that the feature is potentially very powerful.

2. Technical implementation

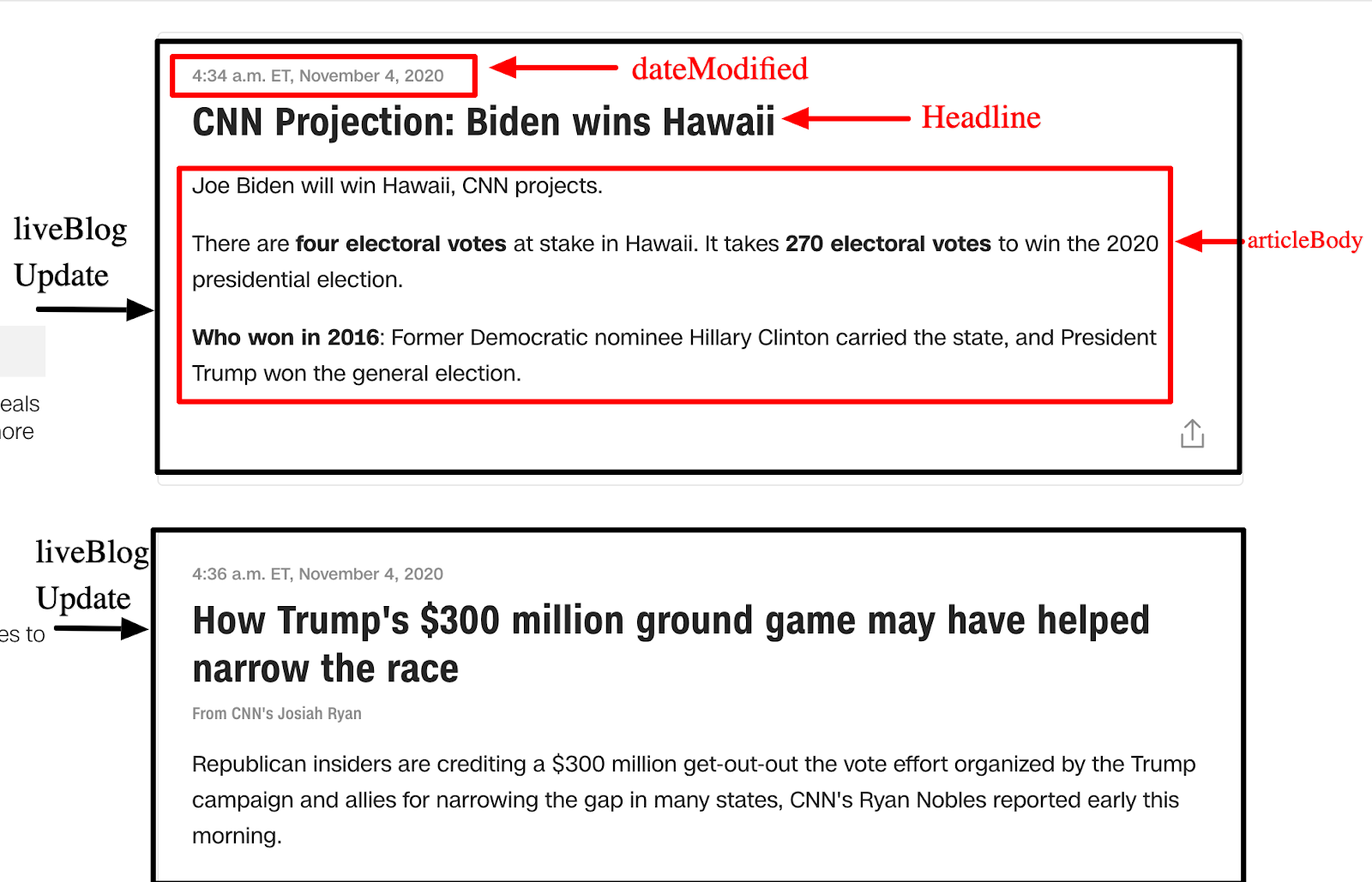

Next, with the help of a developer, you’ll need to implement LiveBlogPosting structured data on your site. There are several key properties you’ll need to include, such as:

- coverageStartTime: When the live blog post begins

- coverageEndTime: When the live blog post ends

- liveBlogUpdate: A property that indicates an update to the live blog. This is perhaps the most important property. Each update can include:

  - headline: The headline of the blog update

  - articleBody: The full text of the blog update

  - datePublished: The time when the update was originally posted

  - dateModified: The time when the update was last modified
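Putting those properties together, the Python sketch below assembles LiveBlogPosting JSON-LD, checks it against the property checklist above, and wraps it in a script tag for the page. Each entry in `liveBlogUpdate` is typed as a BlogPosting, the type schema.org expects for that property. This is an illustration under assumptions, not Google’s specification or any publisher’s implementation: the helper names, headline, and timestamps are placeholders.

```python
import json

REQUIRED_TOP_LEVEL = ("coverageStartTime", "coverageEndTime", "liveBlogUpdate")
REQUIRED_PER_UPDATE = ("headline", "articleBody", "datePublished", "dateModified")


def build_live_blog(headline: str, start: str, end: str, updates: list) -> dict:
    """Assemble LiveBlogPosting JSON-LD from ISO 8601 strings and update dicts."""
    return {
        "@context": "https://schema.org",
        "@type": "LiveBlogPosting",
        "headline": headline,
        "coverageStartTime": start,
        "coverageEndTime": end,
        "liveBlogUpdate": [
            {
                "@type": "BlogPosting",
                "headline": u["headline"],
                "articleBody": u["articleBody"],
                "datePublished": u["datePublished"],
                # Fall back to the publish time if the update was never edited.
                "dateModified": u.get("dateModified", u["datePublished"]),
            }
            for u in updates
        ],
    }


def missing_properties(doc: dict) -> list:
    """Return the checklist properties that are absent from doc."""
    missing = [key for key in REQUIRED_TOP_LEVEL if key not in doc]
    for i, update in enumerate(doc.get("liveBlogUpdate", [])):
        missing += [f"liveBlogUpdate[{i}].{key}"
                    for key in REQUIRED_PER_UPDATE if key not in update]
    return missing


def to_script_tag(doc: dict) -> str:
    """Wrap the JSON-LD in a script tag.

    "</" is escaped as "<\\/" (a valid JSON escape) so that update text can
    never close the script tag early.
    """
    payload = json.dumps(doc, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


doc = build_live_blog(
    "Example event: live updates",
    start="2020-11-03T08:00:00-05:00",
    end="2020-11-04T08:00:00-05:00",
    updates=[
        {"headline": "Example update 1", "articleBody": "Placeholder text.",
         "datePublished": "2020-11-03T08:05:00-05:00"},
        {"headline": "Example update 2", "articleBody": "Placeholder text.",
         "datePublished": "2020-11-03T19:10:00-05:00",
         "dateModified": "2020-11-03T19:25:00-05:00"},
    ],
)
print(missing_properties(doc))  # []
print(to_script_tag(doc))
```

Running `missing_properties` on hand-edited markup before publishing is a cheap way to catch an update that lost its timestamp; a tool such as Google’s Rich Results Test remains the authoritative check.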

To make this a little easier to conceptualize, below you can find an example of how CNN implemented this on one of their live blogs. The example features two “liveBlogUpdate” properties from their November 3, 2020 coverage of the election.

Case study

As I previously mentioned, many of these findings were discovered during research for a particular client who was interested in improving visibility for several large single-day events. Because the client is so agile, they were able to get LiveBlogPosting structured data up and running on their site in a fairly short period of time. We then tested whether this structured data would help improve visibility for very competitive “head” keywords during the day.

While we can’t share too much information about the particular wins we saw, we did see significant improvements in visibility for the competitive terms the live blog post was mapped to. In Search Console, we saw year-over-year (YoY) lifts ranging from +200% to upward of +600% in clicks and visibility for many of these terms. During our spot checks throughout the day, we often found the live blog post ranking in positions 1-3 (the first carousel) in Top Stories. The implementation appeared to be a major success in improving visibility in this section of the SERPs.
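For readers less used to YoY figures, the arithmetic is worth spelling out: a +200% lift means three times the prior year’s clicks, and +600% means seven times. The small Python helper below shows the calculation; its name and the click counts are hypothetical and are not the client’s data.

```python
def yoy_change_pct(this_year: float, last_year: float) -> float:
    """Year-over-year change in percent: (current - prior) / prior * 100."""
    if last_year == 0:
        raise ValueError("YoY change is undefined when the prior-year value is 0")
    return (this_year - last_year) / last_year * 100


# Hypothetical click counts for illustration only.
print(yoy_change_pct(3_000, 1_000))  # 200.0
print(yoy_change_pct(7_000, 1_000))  # 600.0
```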

Google vs. Twitter and the need for real-time updates

So the question then becomes, why would Google place so much emphasis on the LiveBlogPosting structured data type? Is it the fact that the page is likely going to have really in-depth content? Does it improve E-A-T in any way?

I interpret the success of this feature as a sign of one of the weaknesses of a search engine and of how Google is trying to adjust accordingly. One of the primary issues with a search engine is that it’s much harder for it to be real-time. If “something” happens in the world, it takes search engines a bit of time to deliver that information to users: the information not only needs to be published, but Google must then crawl, index, and rank it.

However, by the time this happens, the news might already be readily available on platforms such as Twitter. One of the primary reasons users might navigate away from Google to the Twitterverse is that they’re seeking information they want to know right now, and they don’t feel like waiting 30 minutes to an hour for it to populate in Google News.

For instance, when I’m watching the Steelers and see one of our players have the misfortune of sustaining an injury, I don’t start searching Google hoping the answer will appear. Instead, I immediately jump to Twitter and start refreshing like crazy to see if a sports beat writer has posted any news about it.

What I believe Google is creating is a schema type that signals a page is being updated in real time. This gives Google confidence that a trusted publisher has created a piece of content that should be crawled much more frequently and served to users, since the information is more likely to be up to date and accurate. By giving rich features and increased visibility to articles using this structured data, Google is further incentivizing the creation of real-time content that will keep searches on its platform.

This evidence also suggests that signaling to search engines that content is fresh and regularly updated may be an increasingly important factor for the algorithm. When I talked to Dan Hinckley, CTO of Go Fish Digital, he proposed that search engines might need to give preference to articles that have been updated more recently, because Google might not be able to “trust” that older articles still contain accurate information. Thus, keeping content updated may be important to a search engine’s confidence in the accuracy of its results.

Conclusion

You never really know what paths you’ll go down as an SEO, and this was one of the most interesting ones I’ve encountered during my time in the industry. By researching just this one example, we not only figured out a piece of the Top Stories algorithm but also gained insights into the future of the algorithm.

It’s entirely possible that Google will continue to incentivize and reward “real-time” content in an effort to better compete with platforms such as Twitter. I’ll be very interested to see any new research on LiveBlogPosting schema or on Google’s continued preference for updated content.

